Computer science is wildly popular at universities right now, owing in no small part to the robust job market for CS graduates. This market is driven by the voracious appetite of businesses and the public for “tech.” A consequence of increasing CS popularity is the substantial growth of CS course sizes. Such growth presents a significant challenge to instructors of those courses, as the number of CS instructional staff has generally not scaled linearly with the number of students. How can we teach many more students with roughly the same number of instructors but without a (substantial) reduction in overall quality? One part of my answer to this question is: “clicker” quizzes.

I recently blogged about CMSC 330, the undergraduate programming languages course I (co-)teach at the University of Maryland. That post focused on the content of the course. This post considers the more general question of how to deliver a computer science course at scale, focusing on the lecture component and a key piece of my approach: the use of clicker quizzes during class. I’ll consider other aspects of large course management — student Q&A and grading — in a future post.

CS in College: The Landscape

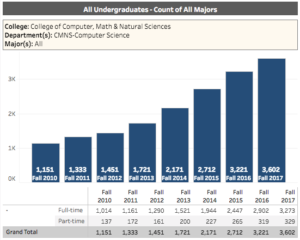

Interest and enrollment in computer science are surging. The Computing Research Association (CRA) has documented the situation quite well in a recent report. UMD CS’s enrollment has tripled in the last 8 years, from 1100+ majors in 2010 to 3600+ in 2017.[ref]I obtained these statistics from UMD’s public reports site.[/ref] This makes it one of the largest programs in the US.

In such a large department, delivering a course required of every CS major presents a challenge of scale. During Spring’18, CMSC 330 had 450+ students in four separate lectures delivered by Anwar Mamat (200ish students), Niki Vazou and Tom Gilray (100ish students), and myself (140ish students). Supporting us were 31 teaching assistants, all but two of whom were undergraduates who had previously excelled in the course. These TAs taught discussion sections, helped put together and grade projects and exams, held office hours, and answered on-line questions. There is no textbook for 330, so the lecture notes serve as the de-facto text, supplemented with some on-line materials.

Our efforts to address scale fall into three areas: lectures, student-staff Q&A, and project and exam grading. In this post I’ll focus on lectures, and in a later one I’ll consider the other two.

Dealing with Large Lectures

Large lectures (probably anything with more than 40 or so students) can be daunting. Being one uncertain face in a large crowd can discourage a student from asking a question, but questions not asked and answered can leave that student feeling lost and discouraged.

Even when one can follow a lecture’s content in the best of circumstances, it can be hard to just listen and take notes for 75 minutes. I used to make lectures interactive by posing questions to the room, to get students thinking actively about the technical material being presented. But in a large class I find that it is always the same small group of students who respond. Nevertheless I persisted, hoping that if students don’t answer my questions directly they are at least working out the answers in their heads. But colleagues have said to me that studies find this often doesn’t happen. Many students are happy to stay passive and not mentally engage.

Flipped Classrooms?

One frequently proposed remedy to the problem that lectures are ineffective is to employ active learning. This means getting students actively engaged in the learning process, rather than just passively listening. One approach is to have students break into groups to work out problems during lecture. Taking this idea to the extreme leads to flipped classrooms, a teaching style in which students read or watch videos in advance of class, and then work on problems during class.

I have not tried a flipped classroom, but colleagues who have report good experiences. Getting students to do the prep work in advance can be a challenge, so some colleagues incentivize it by having a quiz at the start of every class. Another challenge is getting all students to participate equally; those more passively inclined might be content to let the others in their group do the work. This can also probably be addressed with the right incentives.

Rather than go the route of a flipped classroom, I have adopted a different style of active learning: in-class clicker quizzes.

Graded, In-class “Clicker” Quizzes

My lecture in 330 is structured around a “traditional” slide presentation in Keynote or Powerpoint. I try very hard to make the slides well-formatted, pedagogically sound, and self-contained, with all of the key ideas present on the slides. That way, students can come back to them later for easy reference and refreshing. This is in contrast to the “Presentation Zen” style in which slides have lots of pictures, requiring the instructor soundtrack to make sense. I record my lectures using Panopto, which is integrated with UMD’s course management system. Panopto is really easy to use and syncs slides and audio, indexing the recording by slide, making it easy to locate a particular topic later.

To make the lecture more “active,” I intersperse multiple-choice quiz questions throughout the lecture, typically around five per 75-minute lecture, each placed after a relevant sub-topic. When we get to one of these questions, I tell the students to come up with what they think is the right answer. They are free (indeed, encouraged) to confer with their neighbors. Once they’ve done so, they each individually submit an answer using an App on their phone, or a physical device called a clicker. Software running on my laptop receives each answer and tallies the results. After a minute or so I close the quiz, with the result that a histogram is displayed on-screen. Then I display the correct answer to the quiz, and everyone can compare it to their own answer, and the histogram. A good example of the spacing and style of clicker questions I use can be found in this lecture on finite automata and regular expressions (but see the other examples, too, in our lecture schedule).

The clicker quiz results of the day are associated with the student’s ID and they are graded for correctness. Ultimately, 5% of a student’s final grade owes to clicker quiz outcomes. To take the pressure of performance off and to allow for questions that I expect students should get wrong (for pedagogical reasons), I drop the lower 20% of quiz outcomes. As such, if we had 100 clicker questions throughout the semester, a student could get 20 of them wrong and still receive full credit for the 5% clicker portion of their final grade. (Last semester, the average student got about 4.25 of the 5 percentage points on offer for clickers.)
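To make the arithmetic concrete, here is a minimal sketch of the drop-the-lowest-20% rule as I've described it. The function name `clicker_points`, its parameters, and the choice to round the number of dropped questions down are assumptions for illustration; this is not the script we actually use for grading.

```python
def clicker_points(correct: int, total: int,
                   weight: float = 5.0, drop_percent: int = 20) -> float:
    """Percentage points earned toward the final grade from clicker quizzes.

    Hypothetical helper: `weight` is the clicker share of the final grade
    (5 points here) and `drop_percent` is the share of questions forgiven.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= correct <= total:
        raise ValueError("correct must be between 0 and total")
    if not 0 <= drop_percent < 100:
        raise ValueError("drop_percent must be in [0, 100)")

    # Number of forgiven questions, rounded down (an assumed rounding rule).
    dropped = total * drop_percent // 100
    counted = total - dropped

    # Cap at full credit: missing only forgiven questions costs nothing.
    fraction = min(correct / counted, 1.0)
    return weight * fraction


# With 100 questions, 20 wrong answers still earn all 5 points,
# while 40 correct answers earn half of them.
print(clicker_points(80, 100))
print(clicker_points(40, 100))
```

The cap at full credit is what lets me ask questions I expect many students to miss without it costing them anything.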

At UMD we use Turning Point Clicker software. UMD pays for each student’s license to use it, so there is no extra cost to them. Turning has a way to embed clicker quizzes inside of Powerpoint slides, but I don’t use it. Rather, when we reach a quiz during class, I start an “on demand” poll from the Turning Point dashboard; the quiz itself is formatted as an ordinary Powerpoint slide. Doing this avoids needing to install a Powerpoint extension, and it keeps Powerpoint software upgrades from breaking old slides.

Benefits

I have found that clicker quizzes have several benefits. Most importantly, using them gets all students actively engaged, trying to apply the concepts they have just been taught. Everyone has their own clicker and can anonymously submit an answer, so there is no social pressure against participation.

Unlike flipped classrooms, there is no risk of a lack of preparedness getting in the way of participation, nor is there a problem that shy students let more outgoing ones in a small group do the work. It’s also easy to adapt a traditional lecture-based class to use clickers, whereas a flipped classroom is a significant investment.

Like flipped classrooms, clicker quizzes break up the monotony of long periods of just me talking, creating a mental break before moving on. They are also social events. While people are working out their answer there is a buzz in the class that is really exciting. The benefits of the group-based active approach come out here, as those not sure of the answer first try on their own, but can get help from a neighbor if they are stuck. It’s a lot of fun when the histogram pops up and there is an audible “ahh!” or “aww!” as a result. Several students commented in their teaching evaluations this year that they really enjoyed the social aspect of clicker quiz answering.

To get these benefits, I have found that it is important to incentivize participation by grading clicker quiz results. If they are not graded, students will not come to class, and even if they do, they will not participate. This is what a colleague of mine found out when making clicker quiz questions ungraded in 330 last Fall. Grading for correctness, and not just “participation,” is also important, or else students will not be incentivized to actually try to come up with the right answer. They’ll just click “A” every time. My colleague saw this behavior too. Dropping the lower 20% of scores reduces the impact of failure on the student’s grade.

Summary

Large classes in computer science present a significant challenge to the goal of high-quality education. Active learning techniques are helpful overall, but are challenging in large classes, and can require a lot of work to get right. I have found that in-class clicker quizzes strike a nice balance. They bring the benefits of active learning without the large investment of flipping a classroom, and without some other downsides too. I have used clickers for two semesters and I find my lectures now are more fun and engaging, even with large classes (or perhaps because of them), than they were before. Indeed, my teaching evaluation scores are the highest they have ever been (and they were good before).

Of course, other elements of a course beyond the lecture are challenged by large enrollments. I will discuss these — notably student Q&A and grading — in a future post.

Two things. First, in the past, one would argue that if you have many more students, you should have many more teachers. So something must have changed in that mindset (why don’t we want many more teachers?). Second, and unrelated to the first: I’d be curious what people who dropped the major after taking 2-3 courses would say if you asked them why they did so. Are the reasons they state problems that should be solved?

We do argue it. That doesn’t mean we get more resources (i.e., funds to pay additional instructional staff). YMMV.

I don’t personally have data about people who drop the major, so I can’t comment with any certainty about that. Anecdotally, I have heard many different reasons.

We want more teachers. So does everyone else (including industry). Supply and demand.

I was in one class that used clickers, Physics 140 with 175 students in the lecture (this was in 2009, so they all had to buy the clicker hardware), but it counted only as a participation grade: it didn’t matter whether you got it right. Even so, it helped keep people involved, although sometimes people would choose answers that weren’t among the choices (choosing F when the choices were A, B, C, D).

That’s interesting! So that’s evidence in support of making clicker participation count towards the final grade but not necessarily grading correctness. Will contemplate.

I also tried clickers, and counted it as part of the final grade. But one thing I was worried about was that if you allow students to chat during the quiz questions, then there is an inherent unfairness in the grade — part of your final grade depends on who you happen to sit near. Therefore I prohibited talking during the questions, but I could tell that the students didn’t like that (since everyone else seems to allow talking). Should I just be less concerned about unfairness?

This is an interesting point. I think the benefits of chatting, pedagogically, outweigh the unfairness assuming that (a) you don’t make it count for too much of the final grade, and (b) you make the percentage of “free questions” high enough. For 330 in Spring’18, clickers were 4% of the grade, and we dropped 20 wrong answers out of 113 questions (i.e., the grade was computed as #correct / 93). Looking at the distribution of scores, I could imagine increasing the number of dropped questions to 25 or 30.

I should note that a lack of socialization (or socializing with the wrong people) could harm you in other ways too, e.g., by not studying with friends for the exam or by not chatting about the design of your project. These (in)activities probably have a bigger influence on your grade and are not as easily remedied, e.g., by noticing your scores are suffering and changing seats (which I certainly have seen people do).

I do something very similar to Mike in my class (450 students). However, I have two differences in the grading. First, I grade them only on participation, not on correctness. Second, I make the entire grade (5%) ‘redeemable’, which means that if they get a better mark in their final, that will be used. This seems to work pretty well. I use Piazza for this and post the answers after the class, alongside the results of each poll. The responses seem to indicate that most students try hard to get the right answer, so the focus on ‘participation’ rather than correctness does not appear to significantly detract from the seriousness with which the students approach the task. Since the environment is far from ‘sterile’ (the students all have Google at their fingertips), I’m wary about viewing this as summative assessment. I see it as formative.

Interesting ideas! Feeling very tempted by this approach.